AWS Adds Bedrock Tool to Migrate and Test Enterprise AI Prompts Across Models

Amazon Web Services has added a Bedrock feature meant for a problem that often appears only after an artificial intelligence application is already in production: the prompt that works on one model may not work the same way on another.

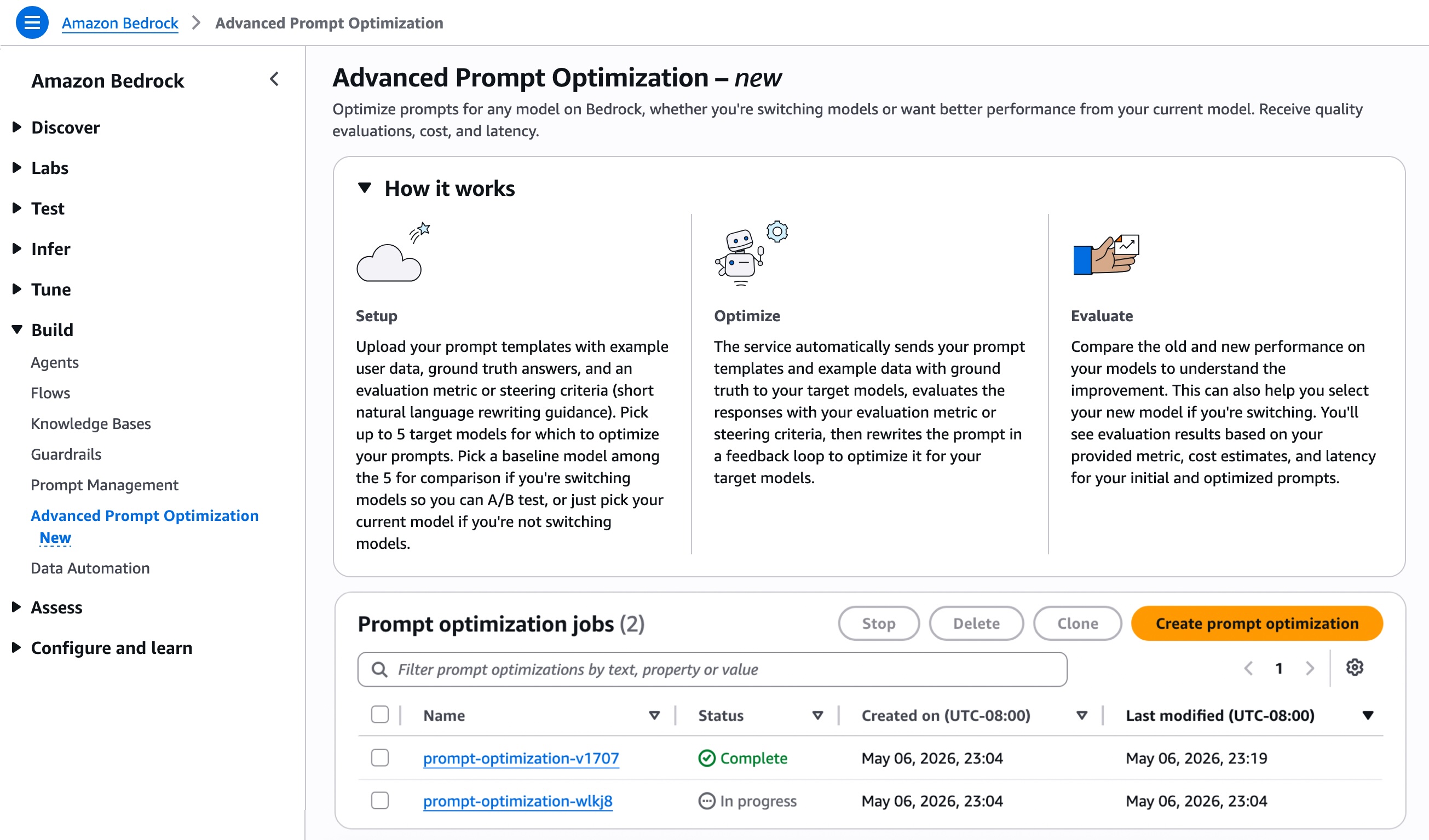

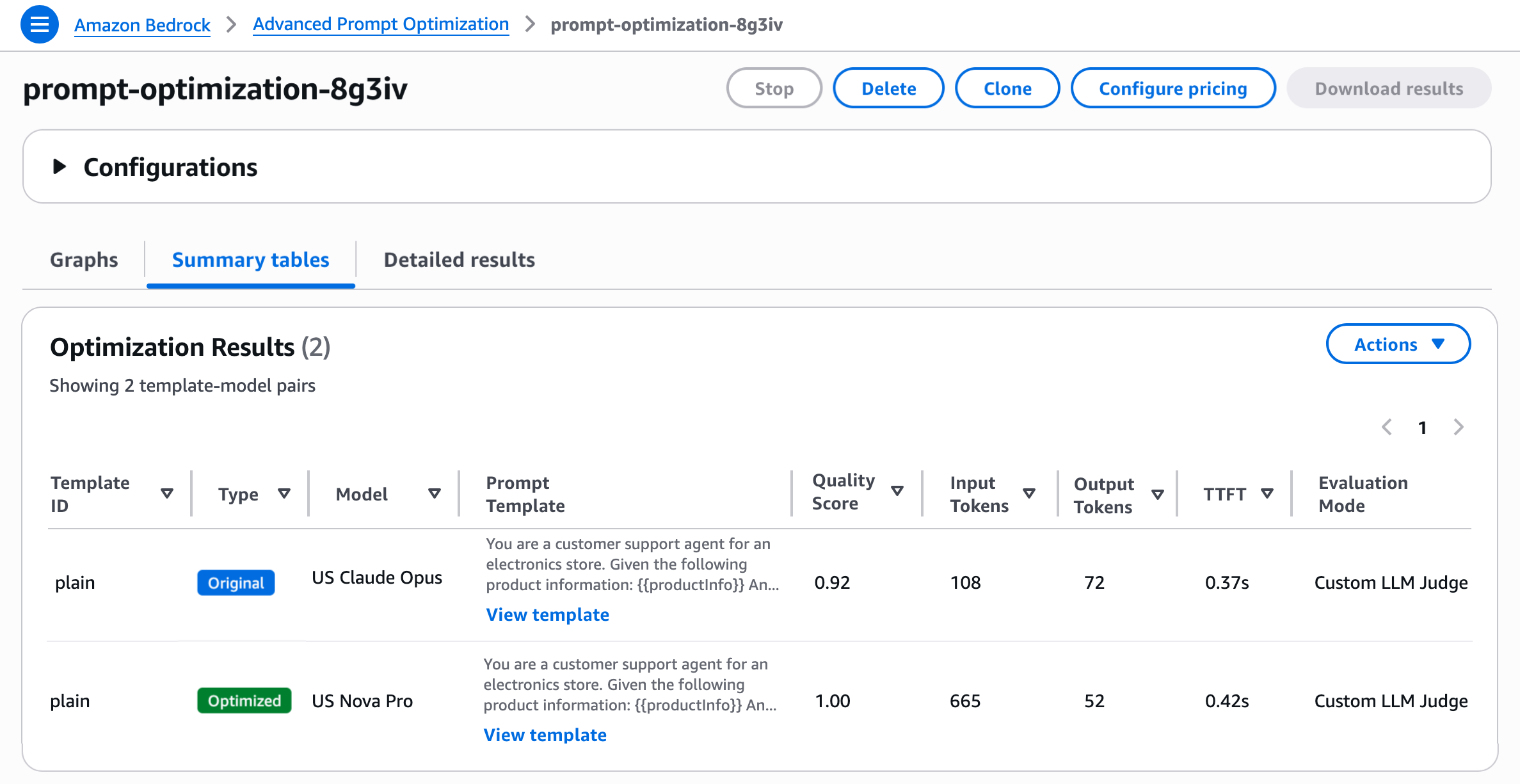

The company announced Amazon Bedrock Advanced Prompt Optimization, a managed workflow that lets customers optimize prompt templates for models available through Bedrock and compare the original prompt with rewritten versions across as many as five models at the same time. AWS says the tool can take prompt templates, sample user inputs, expected answers and an evaluation metric, then return final prompt templates, evaluation scores, cost estimates and latency.

The change is a technical product launch, but its importance is broader than a new prompt editor. AWS is trying to make model switching measurable inside Bedrock, rather than leaving enterprises to rebuild evaluation pipelines each time they want to test a different foundation model. That puts prompt migration, quality scoring and cost comparison into the same cloud control plane where companies already run model inference.

"Today, we’re announcing Amazon Bedrock Advanced Prompt Optimization, a new tool that you can use to optimize your prompts for any model on Amazon Bedrock, while comparing your original prompts to optimized prompts across up to 5 models simultaneously." - Amazon Web Services News Blog

For enterprise developers, the practical message is simple: model choice is no longer just a benchmark contest. It is a software maintenance issue. A prompt is part of the application. If a company changes the model underneath that application, the prompt can produce different formatting, omit important context, answer with the wrong level of detail or cost more to run. AWS is selling a way to test those tradeoffs before changing production behavior.

What AWS Added

Advanced Prompt Optimization is built around evaluation rather than simple rewriting. A customer starts with one or more prompt templates, adds example inputs that fill the variables in those templates, supplies ground truth answers and chooses how the optimizer should judge results. Bedrock then runs an asynchronous optimization job and shows how the original and optimized prompts perform.

AWS documentation says the advanced optimizer accepts as many as 10 prompt templates per job and as many as 100 example user inputs per prompt template. It also supports multimodal examples, including JPG, PNG and PDF inputs. That makes the feature relevant not only to chatbots, but also to workflows such as document extraction, image analysis and customer support systems that use mixed input types.
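Those limits are easy to check before a job is submitted. The following is a minimal sketch of such a pre-flight check. The `PromptTemplate` class, the example dictionary layout and the `validate_job` helper are hypothetical and are not part of any AWS SDK; only the numeric limits and the JPG, PNG and PDF file types come from the documentation described above.

```python
import os
from dataclasses import dataclass, field

# Limits as reported in the AWS documentation described above.
MAX_TEMPLATES_PER_JOB = 10
MAX_EXAMPLES_PER_TEMPLATE = 100
# JPG, PNG and PDF come from the article; treating ".jpeg" as JPG is an assumption.
SUPPORTED_ATTACHMENTS = {".jpg", ".jpeg", ".png", ".pdf"}


@dataclass
class PromptTemplate:
    """Hypothetical container for one template and its examples (not an AWS type)."""
    name: str
    text: str
    # Each example is a dict such as:
    # {"inputs": {"question": "..."}, "ground_truth": "...", "attachments": ["scan.pdf"]}
    examples: list = field(default_factory=list)


def validate_job(templates):
    """Return a list of problems; an empty list means the job fits the stated limits."""
    problems = []
    if not templates:
        problems.append("job needs at least one prompt template")
    if len(templates) > MAX_TEMPLATES_PER_JOB:
        problems.append(
            f"{len(templates)} templates exceeds the limit of {MAX_TEMPLATES_PER_JOB}"
        )
    for template in templates:
        if len(template.examples) > MAX_EXAMPLES_PER_TEMPLATE:
            problems.append(
                f"{template.name}: {len(template.examples)} examples exceeds "
                f"the limit of {MAX_EXAMPLES_PER_TEMPLATE}"
            )
        for index, example in enumerate(template.examples):
            for path in example.get("attachments", []):
                extension = os.path.splitext(path)[1].lower()
                if extension not in SUPPORTED_ATTACHMENTS:
                    problems.append(
                        f"{template.name}: example {index} has unsupported file {path}"
                    )
    return problems
```

A check like this only guards the documented size limits. It says nothing about whether the examples are representative of real traffic.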

The user guide distinguishes this advanced workflow from a quick prompt cleanup. Simple prompt optimization is a faster heuristic rewrite for one short prompt. Advanced Prompt Optimization is closer to a small test harness. It lets customers compare the current model with candidate models, score the outputs and review cost and latency data.

"The optimizer takes your prompt templates (up to 10 per job), example user inputs for variable values (up to 100 per prompt template), ground truth answers, and an evaluation metric to guide the optimization." - Amazon Bedrock User Guide

The product also gives customers several ways to define quality. They can use a custom AWS Lambda function when the scoring logic is precise and programmatic. They can use an LLM-as-a-judge approach when a rubric is better suited to evaluating style, completeness or instruction following. They can also provide short natural-language criteria to steer optimization.
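As an illustration of the programmatic option, a Lambda-style scorer could be as small as a normalized exact-match check. This is a sketch under stated assumptions: the article does not describe the event payload Bedrock sends to the function or the response it expects, so the `model_output` and `ground_truth` keys and the `{"score": ...}` return shape are hypothetical. Only the `lambda_handler(event, context)` signature is the standard Python convention for AWS Lambda.

```python
import re


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so trivial differences do not cost points."""
    return re.sub(r"\s+", " ", text).strip().lower()


def exact_match_score(model_output: str, ground_truth: str) -> float:
    """Return 1.0 when the normalized strings are identical, otherwise 0.0."""
    return 1.0 if normalize(model_output) == normalize(ground_truth) else 0.0


def lambda_handler(event, context):
    # ASSUMPTION: the event keys and the response shape below are illustrative
    # placeholders, not the documented Bedrock contract.
    score = exact_match_score(event["model_output"], event["ground_truth"])
    return {"score": score}
```

Exact match suits extraction tasks with a single right answer. Open-ended answers would usually need a rubric or a judge model instead.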

That range matters because not every AI workload has the same definition of success. A support chatbot might need concise and policy-compliant answers. A document analysis workflow might need exact extraction from a PDF. A coding assistant might need tests to pass. A single generic score would not capture all of those cases, so AWS is asking customers to bring the evaluation method that matches their application.

Why Prompt Migration Is Hard

Prompts look portable because they are written in ordinary language. In production systems, they behave more like configuration code. They embed assumptions about model behavior, output formatting, context windows, tool use, safety behavior and how much instruction the model needs to perform a task.

A prompt tuned for one model can become too vague on another. It can also become too verbose, increasing token cost without improving the answer. One model may follow a JSON formatting instruction strictly, while another may add explanatory text that breaks a downstream parser. A customer moving from one model family to another therefore faces more than a copy-and-paste exercise.
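The JSON case is easy to reproduce. In the sketch below, the two response strings are invented for illustration and do not come from any particular model; `parse_strict` stands in for a hypothetical downstream parser that accepts only a bare JSON object.

```python
import json


def parse_strict(raw: str):
    """Return the parsed object, or None if the text is not a bare JSON object."""
    try:
        parsed = json.loads(raw)
    except json.JSONDecodeError:
        return None
    return parsed if isinstance(parsed, dict) else None


# Invented example outputs for the same prompt on two different models.
strict_output = '{"order_id": "A-17", "status": "shipped"}'
chatty_output = (
    "Sure! Here is the JSON you asked for:\n"
    '{"order_id": "A-17", "status": "shipped"}'
)

strict_result = parse_strict(strict_output)  # parses to a dict
chatty_result = parse_strict(chatty_output)  # None: the preamble is not valid JSON
```

The payload is identical in both responses, yet only the first one survives the parser. A regression set that includes format checks would catch this kind of failure before a migration.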

That is why AWS emphasizes comparison across models. The feature does not merely rewrite a prompt and declare it better. It lets customers define examples and expected answers, then inspect how the baseline and optimized prompts perform under selected scoring rules. The output includes cost estimates and latency, which are essential for production applications where a marginal quality gain may not justify a slower or more expensive request.
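One way to act on that kind of report is to require a minimum quality gain and cap the acceptable cost and latency increase. The sketch below is an assumption about how a team might apply such a rule, not a description of how Bedrock ranks results; the `Result` fields, thresholds and sample numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Result:
    label: str
    score: float       # quality score between 0 and 1
    cost_usd: float    # estimated cost per 1,000 requests
    latency_ms: float  # typical response latency


def pick_candidate(baseline, candidates, min_gain=0.02,
                   max_cost_ratio=1.25, max_latency_ratio=1.25):
    """Return the best candidate that beats the baseline within budget, else the baseline."""
    best = baseline
    for candidate in candidates:
        within_budget = (
            candidate.cost_usd <= baseline.cost_usd * max_cost_ratio
            and candidate.latency_ms <= baseline.latency_ms * max_latency_ratio
        )
        gains_enough = candidate.score >= baseline.score + min_gain
        if within_budget and gains_enough and candidate.score > best.score:
            best = candidate
    return best


# Hypothetical numbers for illustration only.
baseline = Result("current model, original prompt", 0.80, 1.00, 900)
candidates = [
    Result("model B, optimized prompt", 0.90, 3.00, 1500),  # better but 3x the cost
    Result("model A, optimized prompt", 0.86, 1.10, 950),   # modest gain, within budget
]
choice = pick_candidate(baseline, candidates)
```

With these numbers the rule selects the second candidate: the first scores higher but exceeds both the cost and latency caps.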

The important caveat is that the tool is only as useful as the evaluation set and metric behind it. If a customer supplies narrow examples or weak ground truth answers, the optimizer can still miss real-world failures. AWS is providing the managed workflow, not a guarantee that every AI application will improve automatically.

The Bedrock Strategy

Bedrock is AWS's managed platform for building generative AI applications with a range of foundation models. The product page says Amazon Bedrock powers generative AI for more than 100,000 organizations worldwide, from startups to global enterprises. The new optimization tool fits that positioning because it treats model variety as a platform feature that needs management, testing and governance.

There is a tension inside that pitch. Bedrock gives customers access to multiple model options, which supports model optionality. At the same time, the evaluation and migration workflow keeps the surrounding tooling inside AWS. A company may gain flexibility across models while becoming more dependent on Bedrock as the place where those models are selected, tested and operated.

For AWS, that is a logical cloud strategy. As foundation models become more interchangeable for some enterprise tasks, the durable value can move to the layer that handles evaluation, routing, governance, data access, security and cost control. Prompt optimization is one piece of that layer. It helps turn a model marketplace into an operational platform.

The business stakes are visible in Amazon's own filing. In its Form 10-K for fiscal year 2025, Amazon said AWS sales increased 20 percent from the prior year, with growth primarily reflecting increased customer usage and partly offset by pricing changes tied largely to long-term customer contracts. Bedrock features that deepen usage of AWS AI services connect directly to that growth engine.

Cost, Regions and Caveats

AWS says customers are charged based on the Bedrock model inference tokens consumed during optimization at normal per-token rates. That makes pricing workload-dependent. A job that tests many examples across several models can consume more tokens than a small one-model cleanup. The pricing page also notes that Bedrock pricing depends on modality, provider and model, which means a universal price for this feature would be misleading.
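A rough sense of scale can be worked out with simple arithmetic. The sketch below is a back-of-the-envelope estimate, not AWS's billing formula: the per-1,000-token prices are placeholders rather than real Bedrock rates, and the `passes` parameter is an assumption that stands in for however many times the optimizer actually runs each example, which the article does not specify.

```python
def estimate_job_cost(n_templates, n_examples, models,
                      avg_input_tokens, avg_output_tokens, passes=1):
    """Estimate token spend in USD for one optimization job.

    models maps a model name to (input_price, output_price) per 1,000 tokens.
    All prices are placeholders supplied by the caller, not real Bedrock rates.
    """
    calls_per_model = n_templates * n_examples * passes
    total = 0.0
    for input_price, output_price in models.values():
        cost_per_call = (
            avg_input_tokens / 1000 * input_price
            + avg_output_tokens / 1000 * output_price
        )
        total += calls_per_model * cost_per_call
    return total


# Placeholder prices for illustration only.
placeholder_models = {
    "model-a": (0.001, 0.002),
    "model-b": (0.003, 0.015),
}
small_job = estimate_job_cost(1, 10, {"model-a": (0.001, 0.002)}, 1000, 500)
large_job = estimate_job_cost(10, 100, placeholder_models, 1000, 500, passes=3)
```

Even with placeholder rates, the structure shows why cost scales with templates, examples and models multiplied together rather than added.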

The documentation also describes Advanced Prompt Optimization as asynchronous. Job duration can range from about 15 minutes to hours depending on the number of prompt templates and samples. That timing is reasonable for a migration or regression testing workflow, but it is not the same as an instant prompt suggestion inside an editor.

Availability is broad but not global. AWS says the feature is available in the U.S. East regions, U.S. West (Oregon), several Asia-Pacific regions, Canada (Central), multiple Europe regions and South America (São Paulo). Enterprises with strict residency or regional architecture requirements still need to check whether the service is available where their workload runs.

The feature also does not eliminate human review. Teams still need to decide which examples represent real users, which metrics matter, how to weigh cost against accuracy and whether a prompt change introduces safety or compliance issues. In regulated or high-stakes settings, an optimized prompt should be treated as a candidate for testing, not as an automatic production replacement.

The Bottom Line

AWS's Bedrock launch shows how the enterprise AI fight is moving from model access to model operations. Advanced Prompt Optimization gives customers a managed way to compare prompt behavior across as many as five models, score results with customer-defined metrics and review cost and latency before migration.

That does not make prompt engineering disappear. It changes where the work happens. Instead of manually rewriting prompts and hoping a new model behaves the same way, developers can place prompt migration inside an evaluation workflow. If the tool performs as AWS describes, it makes one hidden cost of model competition more visible: the cost of proving that a different model can run the same application without breaking it.