U.S. AI Center Gets Early Access To Frontier Models

New CAISI agreements give federal testers pre-release access to Google DeepMind, Microsoft and xAI systems for national-security evaluation.

WASHINGTON, D.C. - The federal government's AI testing center said Tuesday it has signed expanded agreements with Google DeepMind, Microsoft and xAI that let U.S. evaluators assess frontier models before the public sees them.

The Center for AI Standards and Innovation, housed at the Commerce Department's National Institute of Standards and Technology, said the agreements cover pre-deployment evaluations, post-deployment assessments and targeted research into frontier AI capabilities. The practical change is access. Federal testers can examine unreleased systems, and NIST said developers frequently provide versions with reduced or removed safeguards so evaluators can test national-security risks directly.

That makes the announcement more than another voluntary safety pledge. It gives the U.S. government a formal path to probe systems that may matter for cybersecurity, biosecurity, critical infrastructure and military planning before those systems enter broad use.

The Story So Far

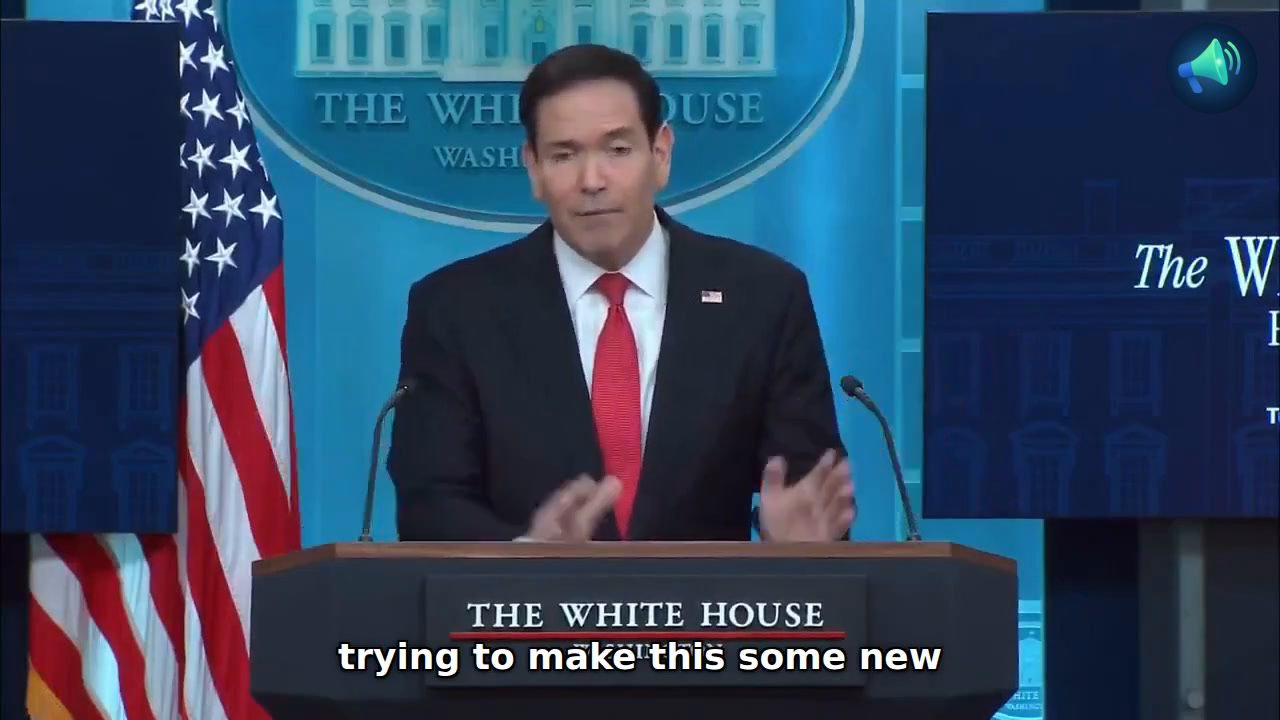

CAISI is the Trump administration's successor to the U.S. AI Safety Institute. Commerce Secretary Howard Lutnick re-established the institute as CAISI under NIST in June 2025. NIST said Tuesday that CAISI has been designated as the AI industry's primary U.S. government contact for testing, collaborative research and best-practice development tied to commercial AI systems.

The center's agreements with Google DeepMind, Microsoft and xAI build on earlier AI safety research agreements that NIST announced in 2024. NIST said the new agreements were renegotiated to reflect the Commerce secretary's directives and the White House AI Action Plan, which lists national-security evaluation of frontier models under its international AI diplomacy and security pillar.

The older safety conversation often centered on benchmark scores, lab-written system cards and public commitments. CAISI's new role is narrower and more operational. NIST said the center has completed more than 40 evaluations to date, including evaluations of state-of-the-art models that remain unreleased.

What's Happening Now

CAISI said its agreements enable government evaluation of models before public availability, plus post-deployment assessment and other research. NIST said the agreements support information-sharing, voluntary product improvements and a clearer government view of AI capabilities and international competition.

The key technical detail is the test configuration. NIST said developers frequently provide CAISI with models that have reduced or removed safeguards to evaluate national-security capabilities and risks. A safeguard-reduced model is not necessarily the product that users will see. It is closer to a stress test of the underlying model, designed to expose what the system may be capable of if guardrails fail, are bypassed or are intentionally stripped away.

Microsoft separately said its new U.S. agreement with CAISI will focus on adversarial assessment methods. The company said Microsoft and NIST will work on shared frameworks, datasets and workflows for testing advanced systems against safety, security and reliability risks.

Microsoft compared the work to stress-testing safety equipment in cars. The company's post said the assessments are meant to probe unexpected behaviors, misuse pathways and failure modes before those weaknesses show up in deployed products.

NIST said evaluators from across the government can participate through the Testing Risks of AI for National Security Taskforce, known as TRAINS. The taskforce brings in expertise from agencies that handle defense, energy, homeland security, cyber, health and intelligence issues.

The TRAINS structure matters because frontier-model risk is not one problem. A model that can assist with malware development raises a different question from a model that can help plan a biological experiment, design a chemical process or support military analysis. NIST's 2024 TRAINS announcement listed radiological and nuclear security, chemical and biological security, cybersecurity, critical infrastructure and conventional military capabilities among the taskforce's domains.

The Conservative View

The administration's policy frame treats the CAISI agreements as part of U.S. AI leadership. The White House AI Action Plan calls for accelerating AI innovation, building American AI infrastructure and leading in international AI diplomacy and security. Under that third pillar, the plan says the U.S. government should be at the forefront of evaluating national-security risks in frontier models.

Supporters of the voluntary-access model can point to speed and cooperation. By keeping the process centered on agreements with U.S. labs, CAISI can gain access to sensitive model details without building a broad pre-release licensing system that could slow American developers or push testing behind legal walls.

That view also fits the China-competition language in the Action Plan. The same pillar that names frontier-model risk evaluation also calls for countering Chinese influence in international governance bodies and aligning protection measures globally. In that frame, the national-security question is not only whether models are safe, but whether Washington or rival governments set the testing norms.

The Progressive View

Progressive AI-safety advocates are likely to focus on what the announcement does not do. NIST described the agreements as collaborative and voluntary, and the announcement does not say CAISI can block a model release, require changes or publish full evaluation results before launch.

That distinction matters for accountability. Pre-deployment access can give federal experts a sharper view of frontier capabilities, but NIST's Tuesday announcement framed developer changes as voluntary product improvements. The agreements may reduce the government's blind spots without creating a mandatory approval regime.

Safety-focused critics can still argue, based on NIST's own language, that reduced-safeguard testing shows why independent access is needed. If a model can do dangerous work once guardrails are removed, the public-policy question becomes how much confidence regulators should place in safeguards, monitoring and use policies after deployment.

Other Perspectives

Libertarian and open-source advocates may see a different risk. If frontier evaluations become concentrated inside classified environments and private agreements, the public may get fewer details about what was tested, which failure modes were found and how government officials define unacceptable capability.

Industry has its own incentive to cooperate. Microsoft said national-security and large-scale public-safety testing requires government expertise that companies do not have on their own. For a frontier lab, CAISI access can also create an early warning channel before a model launch creates political, security or reputational damage.

Internationally, Microsoft's announcement places the U.S. agreement beside a separate agreement with the United Kingdom's AI Security Institute. That matters because frontier AI companies deploy products across borders, while governments are trying to build national testing capacity without fully separating from one another.

Economic Implications

The immediate market effect is limited because the CAISI agreements do not impose a fee, a launch ban or a new public compliance rule. The economic importance is in the release process for frontier models. Pre-deployment testing can add friction for labs if government review expands, but it can also reduce costly failures if evaluators find security weaknesses before launch.

For Microsoft, the agreement connects directly to product risk. The company said it will apply lessons from the partnerships to how it designs, tests and deploys AI systems. That matters because Microsoft sells AI tools through enterprise software, cloud infrastructure and developer platforms, where a major safety or security incident could affect customer trust and procurement.

The broader industry tradeoff is access versus burden. Voluntary agreements may keep U.S. labs close to federal evaluators while avoiding a European-style compliance model. But if voluntary testing becomes the main policy tool, the strength of the system depends on whether the largest labs keep cooperating as model capabilities and commercial stakes rise.

By the Numbers

- 3 companies are named in the new CAISI agreements: Google DeepMind, Microsoft and xAI, according to NIST.

- More than 40 evaluations have been completed by CAISI, including evaluations of unreleased state-of-the-art models, according to NIST.

- More than 10 federal agencies participate in TRAINS as of May 2026, according to NIST's updated taskforce page.

- 5 risk domains are named in NIST's TRAINS announcement: radiological and nuclear security, chemical and biological security, cybersecurity, critical infrastructure and conventional military capabilities.

What People Are Saying

"Independent, rigorous measurement science is essential to understanding frontier AI and its national security implications." - Chris Fall, CAISI director, according to NIST.

"CAISI's agreements with frontier AI developers enable government evaluation of AI models before they are publicly available, as well as post-deployment assessment and other research." - NIST announcement, May 5, 2026.

"To thoroughly evaluate national security-related capabilities and risks, developers frequently provide CAISI with models that have reduced or removed safeguards." - NIST announcement, May 5, 2026.

"Testing for national security and large-scale public safety risks necessarily must be a collaborative endeavor with governments." - Microsoft, May 5, 2026.

"The Taskforce will enable coordinated research and testing of advanced AI models across critical national security and public safety domains, such as radiological and nuclear security, chemical and biological security, cybersecurity, critical infrastructure, conventional military capabilities, and more." - NIST TRAINS announcement, November 20, 2024.

The Big Picture

The CAISI agreements move frontier AI evaluation from public benchmark culture toward earlier, more technical government access. NIST's announcement does not say the government will certify models before release, but it does say federal evaluators can test unreleased systems and reduced-safeguard versions in national-security contexts.

The next signal will be how much of that testing becomes visible outside government and the labs. If CAISI publishes methods, broad findings or testing standards, the agreements could shape the wider evaluation market. If most of the work remains classified or confidential, the public may see the policy outcome only when companies change launch plans, safeguards or disclosure practices.